Regents Recap — June 2015: Cubics, Conversions, and Common Core

Here is another installment in my series reviewing the NY State Regents exams in mathematics.

One of the biggest differences between the new Common Core Regents exams and the old Regents exams in New York State is the set of conversion charts that turn raw scores into “scaled” scores.

The conversions for the new Common Core exams make it substantially more difficult for students to earn good scores. The changes are particularly noticeable at the high end, where the notion of “Mastery” on New York’s state exams has been dramatically redefined.

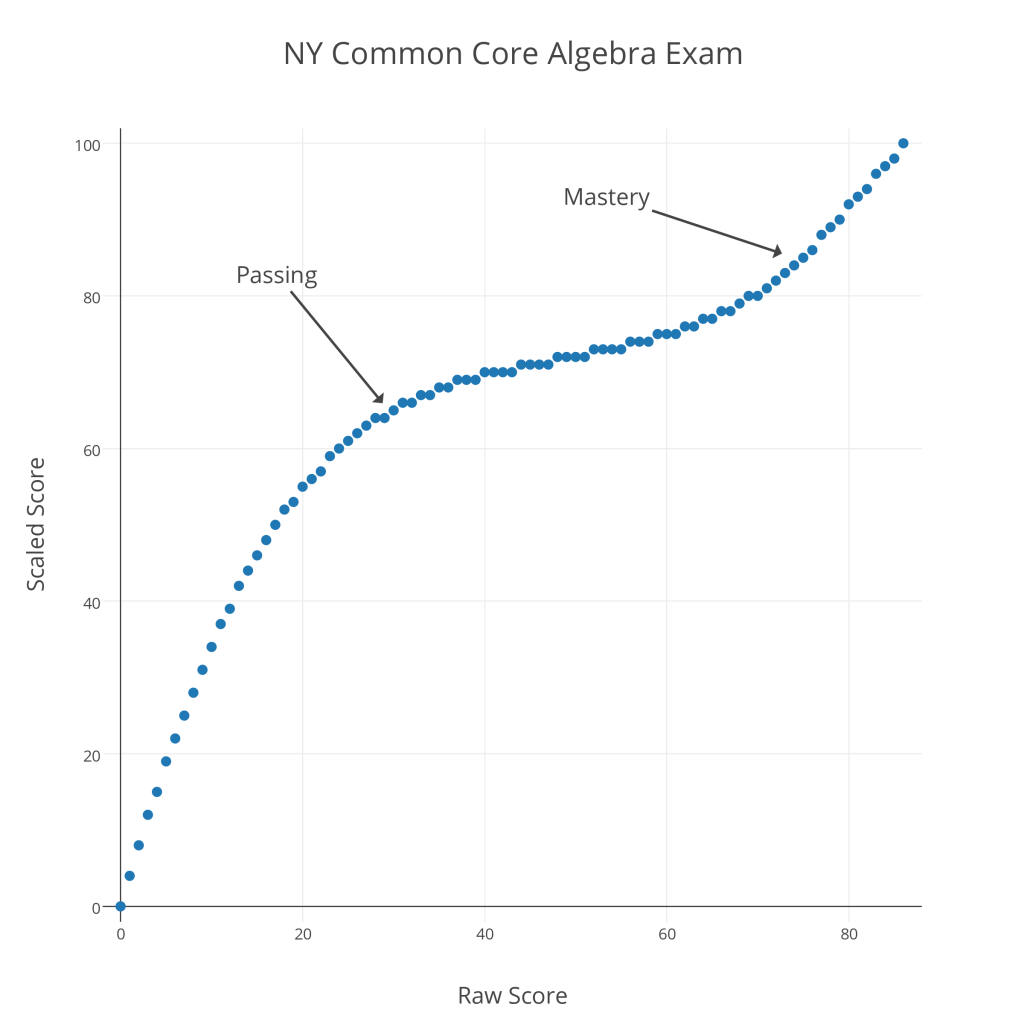

Below is a graph showing raw versus scaled scores for the 2015 Common Core Algebra Regents exam.

As with last year’s Common Core Algebra Regents exam, there is a remarkable contrast between “Passing” and “Mastery” scores. To pass this exam (a 65 “scaled” score), a student must earn a raw score of 30 out of 86 (35%); to earn a “Mastery” score on this exam (an 85 “scaled” score), a student must earn a raw score of 75 out of 86 (87%). It seems clear that the new conversions are designed to reduce the number of “Mastery” scores on these exams.
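Both percentages come from dividing each cut score by the 86 available raw points. The same arithmetic shows how much of the test separates the two cuts: the 20 scaled points between Passing and Mastery span 45 raw points.

```latex
\frac{30}{86} \approx 0.349 \approx 35\%, \qquad
\frac{75}{86} \approx 0.872 \approx 87\%, \qquad
\frac{75 - 30}{86} = \frac{45}{86} \approx 0.523
```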

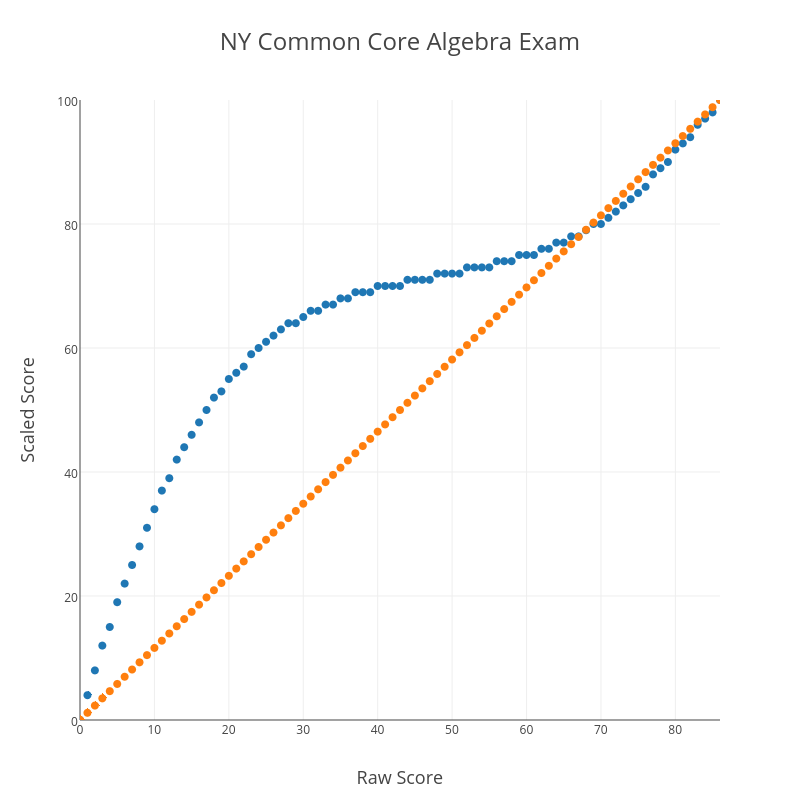

Another curious feature of this conversion chart is what happens at the upper end. Consider the graph below, which shows the CC Algebra raw vs. scaled score in blue and a straight-percentage conversion (87% correct “scales” to an 87, for example) in orange.

At the very high end, the blue conversion curve dips below the orange straight-percentage curve. This means that, above a certain threshold, there is a negative curve for this exam! For example, a student with a raw score of 82 has earned 95% of the available points, but actually receives a scaled score of less than 95 (a 94, in this case). I suspect there are people who will claim expertise in these matters and argue that this makes sense for some reason, but it certainly doesn’t make common sense.
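One way to check for a negative curve is to subtract the straight percentage from the scaled score at each raw score: a positive difference is a boost, a negative one a penalty. This is a minimal sketch that uses only the (raw, scaled) pairs quoted in this post, since the full conversion chart is not reproduced here; the names `TOTAL_POINTS`, `QUOTED`, and `curve_by_raw` are hypothetical.

```python
# Hypothetical names; TOTAL_POINTS and QUOTED hold only figures quoted in this post.
TOTAL_POINTS = 86  # maximum raw score on the June 2015 CC Algebra Regents
QUOTED = [(30, 65), (75, 85), (82, 94), (86, 100)]  # (raw, scaled) pairs


def curve_by_raw(pairs, total):
    """Map each raw score to its scaled score minus its straight percentage."""
    return {raw: scaled - 100 * raw / total for raw, scaled in pairs}


curves = curve_by_raw(QUOTED, TOTAL_POINTS)
for raw, scaled in QUOTED:
    percent = 100 * raw / TOTAL_POINTS  # the straight-percentage "conversion"
    diff = curves[raw]
    if diff > 0:
        label = "positive curve"
    elif diff < 0:
        label = "negative curve"
    else:
        label = "no curve"
    print(f"raw {raw}: {percent:.1f}% correct -> scaled {scaled} ({diff:+.1f}, {label})")
```

The row for 82 reproduces the example in the text: 95.3% correct, scaled to 94. By the same comparison, the 75-point Mastery cutoff (about 87.2% correct, scaled to 85) also falls below the straight-percentage line.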

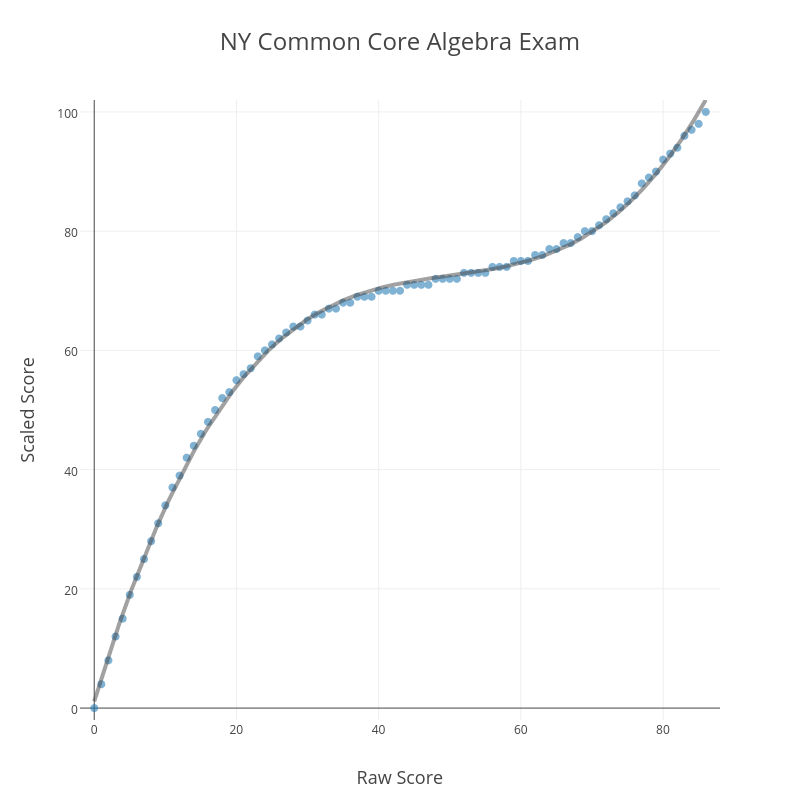

One final curiosity about this conversion. It’s no accident that the blue plot of raw vs. scaled scores looks like a cubic function.

Running a cubic regression on the (raw score, scaled score) pairs yields

That is a remarkably strong correlation. Clearly, those responsible for creating this conversion began with the assumption that the conversion should be modeled by a cubic function. What is the justification for such an assumption? It’s hard to believe this is anything but an arbitrary choice, made to produce the kinds of outcomes the testers know they want to see before the test is even administered.
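A cubic regression is an ordinary least-squares fit of y = ax³ + bx² + cx + d, and R² measures how much of the variation in scaled scores the fitted cubic explains. The sketch below only illustrates the technique and is not the computation behind the result above. Because the full chart isn't reproduced here, it uses just the five (raw, scaled) pairs that appear in this post and its comments, and it solves the normal equations exactly with Python's `fractions` module rather than calling a statistics package. Its coefficients will therefore differ from a regression on the whole chart.

```python
from fractions import Fraction

# Only the (raw, scaled) pairs quoted in this post and its comments;
# the full 2015 conversion chart is not reproduced here.
PAIRS = [(0, 0), (30, 65), (75, 85), (82, 94), (86, 100)]


def solve(matrix, rhs):
    """Solve a square linear system exactly by Gauss-Jordan elimination."""
    n = len(matrix)
    aug = [[Fraction(v) for v in row] + [Fraction(b)] for row, b in zip(matrix, rhs)]
    for col in range(n):
        # Swap a row with a nonzero pivot into position, then clear the column.
        pivot = next(r for r in range(col, n) if aug[r][col] != 0)
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * p for a, p in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]


def cubic_regression(pairs):
    """Least-squares coefficients (a, b, c, d) via the normal equations."""
    rows = [[x**3, x**2, x, 1] for x, _ in pairs]
    ys = [y for _, y in pairs]
    # Normal equations: (X^T X) beta = X^T y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(4)] for i in range(4)]
    xty = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(4)]
    return solve(xtx, xty)


def r_squared(pairs, coeffs):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    a, b, c, d = coeffs
    ys = [Fraction(y) for _, y in pairs]
    mean = sum(ys) / len(ys)
    ss_res = sum((y - (a * x**3 + b * x**2 + c * x + d)) ** 2
                 for (x, _), y in zip(pairs, ys))
    ss_tot = sum((y - mean) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


coeffs = cubic_regression(PAIRS)
print([float(k) for k in coeffs])
print(float(r_squared(PAIRS, coeffs)))
```

Even with only these five pairs, R² comes out above 0.999 but not equal to 1, because no single cubic passes through all five points.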

These conversion charts are just one of many subtle ways these tests and their results can be manipulated. Jonathan Halabi has detailed the recent history of such manipulations in a series of posts at his blog. These are the kinds of things we should keep in mind when tests are described as objective measures of student learning.


4 Comments

Jonathan Halabi · July 12, 2015 at 10:48 am

Patrick,

George Reuter from Geneseo, who worked on one aspect of this, wrote, “The cubic is also gone, and I don’t know everything about the function that fits the rest of the scores.” I thought he was mistaken, and since you seem to agree, I decided to look further.

Working backwards:

1. S(x) = Ax^3 + Bx^2 + Cx + D

2. (0,0), (30,65), (75,85), (86,100)

3. Use (0,0) to get D = 0, and forget both of them the rest of the way.

4.

86^3A + 86^2B + 86C = 100

75^3A + 75^2B + 75C = 85

30^3A + 30^2B + 30C = 65

System of three equations, three unknowns?

I used a calculator:

A = 0.00045787330671051514

B = -0.07103966016756703

C = 3.885770495654214

And this is close, but not a match for the conversion chart. It’s off by 2 points in several places, so it isn’t just an odd rounding of the results. I tried rounding the coefficients. No dice.

But it sure feels cubic to me. I’d like to know what they are doing.
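The three-equation system in step 4 can be checked with a short script. This is a minimal sketch, not the commenter's calculator method: it solves the system with Cramer's rule in exact rational arithmetic (Python's `fractions` module), using the points (86, 100), (75, 85), and (30, 65) from step 2, with D = 0 already fixed by (0, 0). The helper names `det3`, `solve_cramer`, and `S` are illustrative.

```python
from fractions import Fraction

# The three non-origin points from step 2; (0, 0) already forced D = 0.
POINTS = [(86, 100), (75, 85), (30, 65)]


def det3(m):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve_cramer(m, rhs):
    """Solve m v = rhs for a 3x3 system with Cramer's rule, exactly."""
    d = Fraction(det3(m))
    solution = []
    for col in range(3):
        # Replace column `col` with the right-hand side and take the ratio.
        mc = [[rhs[r] if c == col else m[r][c] for c in range(3)] for r in range(3)]
        solution.append(det3(mc) / d)
    return solution


matrix = [[x**3, x**2, x] for x, _ in POINTS]
rhs = [y for _, y in POINTS]
A, B, C = solve_cramer(matrix, rhs)


def S(x):
    """The fitted conversion S(x) = Ax^3 + Bx^2 + Cx."""
    return A * x**3 + B * x**2 + C * x


print(float(A), float(B), float(C))
print([float(S(x)) for x in (0, 30, 75, 86)])  # → [0.0, 65.0, 85.0, 100.0]
```

The printed coefficients agree with the calculator values above, and because the arithmetic is exact, the cubic hits all four anchor points with no rounding at all.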

MrHonner · July 12, 2015 at 12:02 pm

I simply ran a regression on the (raw, scaled) ordered pairs. R^2 isn’t exactly 1, so it’s not a perfect fit, but it’s close enough. They are obviously starting from the premise that, for whatever reason, this conversion should look cubic.

Jason Mutford · September 25, 2025 at 9:34 am

Typo in your regression equation. You meant 0.076, not 0.76.

MrHonner · September 25, 2025 at 5:13 pm

Thanks! A remarkable catch.