Regents Recap — June 2014: Common Core Algebra, “Explain your answer”

Here is another installment in my series reviewing the NY State Regents exams in mathematics.

June 2014 saw the administration of the first official Common Core Regents exam in New York state, Algebra I (Common Core). Roughly speaking, this exam replaces the Integrated Algebra Regents exam, which is the first of the three high school level math Regents exams in New York.

The rhetoric surrounding the Common Core initiative often includes phrases like “deeper understanding”, and the standards themselves speak directly to students communicating about mathematics. These are noble goals.

So, when it comes to the Common Core exams, it’s not surprising that we see directives like “Explain how you arrived at your answer” and “Explain your answer based on the graph drawn” more often.

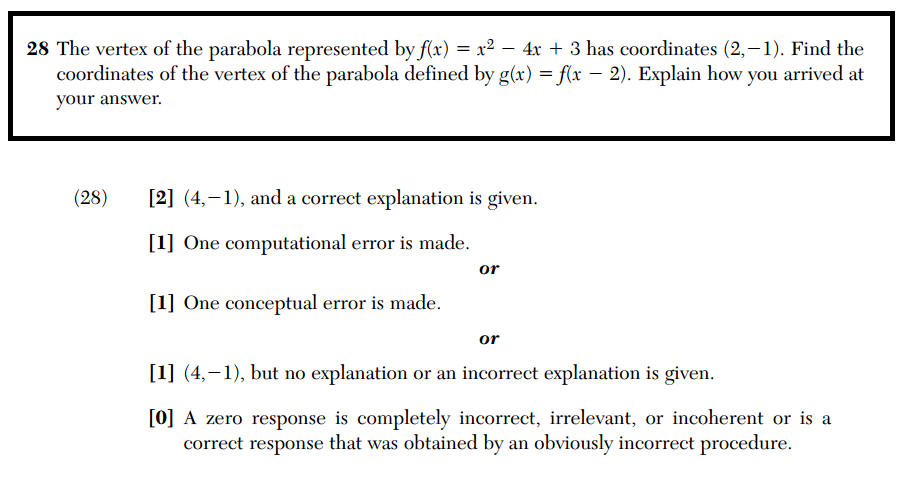

But including such phrases on exams won’t accomplish much if the way the student answers are assessed doesn’t change. Here’s number 28 from the Algebra I (Common Core) exam, together with its scoring rubric.

Notice that the scoring rubric gives no indication as to what constitutes a “correct explanation”. When scoring these exams, groups of readers are typically given a few samples of student work and discuss what a “correct explanation” looks like. But people grading these exams often have drastically different ideas about what constitutes justification and explanation. Given the importance Common Core seems to attach to explanation, I’m surprised that the scoring rubric takes no official position here. In fact, this rubric is essentially identical to those used for the pre-Common Core Integrated Algebra exam.

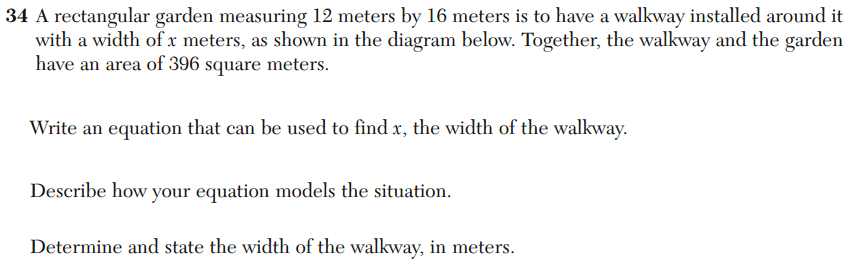

There’s a real danger in simply tacking on generic “Explain … / Describe …” directives to exam items. Consider number 34 from the Algebra I (Common Core) exam.

It’s not really clear to me what a valid response to the directive “Describe how your equation models the situation” would look like. Nor an invalid response, for that matter. So what do students make of such a directive? I suspect that, for many, it just becomes another part of the meaningless background noise of standardized testing, another place where they simply have to guess what the test-makers want to hear. And according to the rubric, the test-graders will have to guess, too.

Yes, students should be communicating about mathematics, their processes, and their ideas. But just adding “Explain how you got your answer” to a test question isn’t going to do much to help achieve that goal.

10 Comments

Amy Hogan · September 6, 2014 at 12:12 pm

I think you are echoing what I find most troubling about these types of rubrics and their vagueness. The language and the lack of a unified grading system means that this actually promotes inconsistency in grading these types of questions. If a standardized exam is really desired, the testing committee needs to ensure that teachers grading in Brooklyn will grade a question in the same way teachers in Buffalo would grade it. That’s obviously not happening here.

Dave Marain · September 7, 2014 at 1:04 pm

Agreed.

How many decades have open-ended questions like these been around, and still we’re discussing quality? Since the ’80s I’ve argued that open-ended does not = vagueness and ambiguity.

What we need to do is rewrite the question, i.e., “model” it for them!

Here’s a stab at it but I know others could do better…

(a) Show that the width of the walkway is not 4 meters.

(b) Express the dimensions of the overall figure (garden and walkway) in terms of x, the width of the walkway.

(c) Write an equation that can be used to solve for x.

(d) Solve the equation and state the value of x.

Sendhil Revuluri · September 8, 2014 at 3:44 pm

Patrick, as usual, your point is well-taken. I’ve had the same concerns since I was scoring Regents exams. I would say that there are assessments — even with MORE open-ended items — that do a better job of ensuring consistent scoring (which is borne out in their reliability). (See, for example, the resources on MARS assessments at http://www.insidemathematics.org and http://svmimac.org.) This isn’t easy, and involves hard work in design, calibration, and scoring (and sometimes gray areas and arguments).

My caution is that we must keep some balance in mind. If we push too hard for precision – or in assessment terms, reliability – we may sacrifice validity: actually assessing deeper thinking. (Or at least this has often ended up being the case with the constraints of finite budgets.) Certainly items should be good, but I worry about beating on them for absolute imperfections without keeping the relative tradeoffs in mind. Similarly, pushing for better items is in tension with releasing them each year (which is a great resource for teachers and students, but which means you have to make new ones for next year).

I entirely agree that having such items on the “big tests” is not “the whole game” – but it’s at least a start. Teachers definitely look at items from large-scale tests, and they influence instruction. The process should get scrutiny and be pushed to improve, but we should also ensure that we don’t set such a high bar that open-ended items are driven out, leaving just multiple choice items standing.

Kenneth Tilton · September 8, 2014 at 5:02 pm

[Hoping there are still no dumb questions…]

As long as examiners are reading natural language explanations (i.e., not going by multiple choice), why not just have the examinees write out their math solutions and score those?

btw, do we have sample answers with their scores from the test manufacturers?

MrHonner · September 8, 2014 at 6:48 pm

As a practicing teacher whose primary mission, and now performance evaluations, are both directly tied to standardized tests, it’s not my obligation to patiently wait for these exams to get better. New York state has been issuing exams for nearly 100 years. How much more time is needed to get it right?

Nor do I feel compelled to somehow protect the promise of assessment. If these tests can’t deliver on the promises test-makers are making, then students shouldn’t be taking them and my employment shouldn’t be contingent upon them.

Kenneth Tilton · September 8, 2014 at 3:55 pm

“Yes, students should be communicating about mathematics, their processes, and their ideas.”

On the exam? I can see how it helps some learn to do mathematics, but if doing math is our goal should we not be testing exactly that?

Maybe I am too “old school”, but I think it dangerous (and hard to score!) to get away from testing the pure maths.

When do the scores come out? 🙂

Dave Marain · September 8, 2014 at 5:37 pm

Patrick et al…

My direct experience with open-ended questions on state assessments is that often vague, ambiguous questions are developed by some testing company and students struggle to answer them even if they know the concept/skill. In the scoring and item review process, considerable time is spent determining how to evaluate unanticipated student responses. Of course open-ended should lead to a variety of student approaches. The problem is when students are unable to demonstrate their knowledge because the semantics are too confusing. Programmers all know what this phenomenon is called – “garbage in, garbage out!” Teachers need numerous high-quality model problems to use as a basis for implementing the Core. But many released items are not of this caliber. The rewrite of the question which I offered could be criticized for being multi-part but not really open-ended. I did that intentionally to provoke a reaction. Having done the same here in NJ while serving on various state assessment committees, receiving the same non-reaction is what I’ve come to expect. Generic discussions of the pros and cons of open-ended questions will not lead to improvement of quality. That will only happen when highly knowledgeable educators such as the respondents here attempt to write or rewrite their own open-ended questions assessing particular standards. Writing such high-quality questions is highly challenging but then the discussion becomes authentic…

Arpi Lajinian · September 13, 2014 at 9:25 am

I agree.

It is during active class discussions that our students learn to use acceptable mathematical language and develop an understanding of mathematics. Thoughtfully crafted T/F, or “always true, sometimes true, never true” statements with or without prompts like “give an example, a counter example, justify, or explain” have helped me gauge students’ understanding on written assessments.

If well written, these types of questions could offer an alternative to the often unnecessary “Explain” that appears in some sample Common Core questions.

MrHonner · September 13, 2014 at 7:33 pm

I agree that T/F and A-S-N questions can be well-designed and function as excellent student-to-student and whole-class discussion starters. But a virtue of such questions is that they provoke legitimate argument about definitions and assumptions; this is a liability on a standardized exam.

For example, a question like

Two lines either intersect once or are parallel

is a great argument starter in a classroom. Are two coincident lines really just one line? Are we in the plane or in space? Are we assuming the Euclidean axioms? This grey area is great for a classroom, but terrible on a test.

And asking for examples and counterexamples takes great mathematical sophistication, in both posing and evaluating. I think a fitting summary of my 50 or so posts evaluating the NYS Math Regents exams over the past several years is that this mathematical sophistication is terribly lacking in this process.

Kenneth Tilton · September 13, 2014 at 8:44 pm

You all are reminding me of a philosophy professor who managed to pull off multiple choice tests. They were tough, and I doubt anyone successfully argued for an answer other than the one demanded. Each choice alone required a solid understanding of the material and the making of careful distinctions.

The main problem was how hard it was to make these tests. He could not just toss off a new one each semester, and they did get purloined/passed around.