Regents Recap — June 2013: Where Do Circles Belong?

Here is another installment in my series reviewing the NY State Regents exams in mathematics.

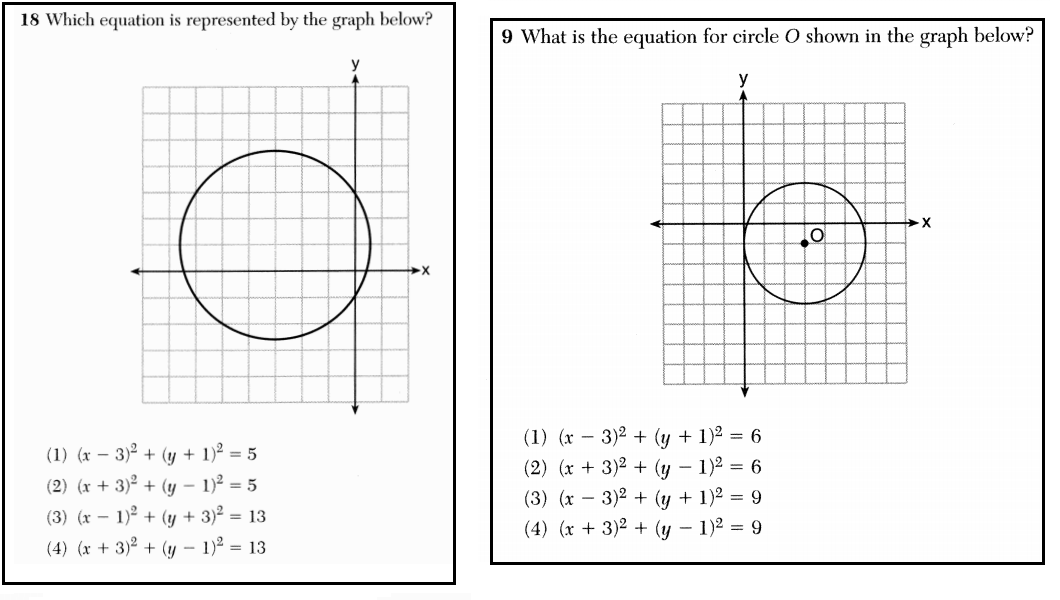

Here are two questions from the June 2013 exams.

These questions aren’t particularly interesting: both give the graph of a circle and ask for its equation. Since the questions are nearly identical, it would be strange to see them on the same exam. It is even stranger, then, to see them on two different exams: one was on the Geometry exam, the other the Algebra 2 / Trig exam.
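For readers who haven’t seen this kind of question in a while, here is a minimal sketch of what it asks. The circle below is a made-up example, not either of the circles from the exams: the center and radius are read off the graph and substituted into the standard form.

```latex
% Standard form of a circle with center (h, k) and radius r
(x - h)^2 + (y - k)^2 = r^2

% Hypothetical example (not from either exam): center (2, -3), radius 4
(x - 2)^2 + (y + 3)^2 = 16
```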

Why does the same kind of question appear on the terminal exams of two different, sequential courses? Are students supposed to learn this topic in Geometry, or in Algebra 2 / Trig? The test-makers don’t seem to know, which calls into question the fairness of these exams.

And the question of fairness has implications for teachers as well as students. Student exam scores now constitute a substantial component of a teacher’s yearly evaluation. If students are supposed to learn how to find the equation of a circle in Geometry class, is it fair to use such a question to evaluate an Algebra 2 / Trig teacher?

The fact that test-makers don’t know which topics belong in which courses raises serious questions about the validity of using these test results to evaluate teacher performance. In addition, one wonders how facing the same question on sequential exams affects the student growth measurements so popular among educational policymakers nowadays.

Unfortunately, this is only the latest in a long line of inappropriate questions on math Regents exams.

8 Comments

Ed Jones · July 8, 2013 at 11:16 am

Patrick, even considering how long it’s been since I did equations like this, I was surprised at how hard I found it to solve. Just goes to show how fast unused muscle degenerates.

Nonetheless, once I was extremely good at such questions. And, if I have anything to contribute today in the world of words and ideas, it’s because of the discipline I learned solving problems like these and math far more advanced.

I don’t follow your logic here.

The point of the tests is to see if enough students are being readied for college and technical work, and for citizenship in a world that runs on a highly mathematical foundation. Where half our days are spent with devices hanging narrowly on the magic of quantum mechanical tunneling. A world where philosophy and even religion itself run up against things like the Uncertainty Principle and the expansion or contraction of the universe.

The two questions above present an easier and a slightly harder version of the same concept. On one, the circle does not cross the x axis at an integer. On the other it does. Also x takes no positive integer values in the left graph.

A student given merely one of these might with incomplete knowledge guess the correct answer to one, but likely not both. I’d guess the designers put them both there first to give some students confidence, second to test that all the concepts involved are thoroughly exercised.

As to which test to put them on, I didn’t even know that this shouldn’t be on the Algebra I test. Again, it’s been a while, but that was my expectation.

Even so, an Algebra 2 student certainly should know it. As, I think, should a half-decent geometry student. If you’re teaching Algebra II and your students don’t know this, I’d say that remedial work is part of your job description.

The logic of your post would have these concepts as separated as Egyptian and US Colonial history. They’re much more tightly integrated than that.

Of course, the above questions are two of a much larger set of questions that I’ve never seen. No doubt there are improvements to be made.

For myself, I’d rather see terminal exams like these completely abolished in favor of competency-based work that transparently assesses that the student has accomplished the work every day of his career. The Khan Academy exercises on steroids, if you will.

Until we have that, though, we need some sort of terminal exams to counter bureaucratic stagnation of huge districts, the political/legal and self-interested handiwork of the NEA and AFT, and the funding shortages that have long afflicted so many of our schools.

While the above hasn’t made a great case, I’m glad people like yourself take checking the tests seriously!

MrHonner · July 8, 2013 at 12:25 pm

Ed-

These tests are not designed to see if “enough students are being readied for college and technical work”. They are designed to see if students have mastered the content of a specific course. Were that not the case, it would serve as an even greater indictment of the use of these test results as a measure of teacher effectiveness.

Of course all teachers should remediate when necessary, but keep in mind the teacher and student will always have a full curriculum of new material to “cover”. If they don’t, both the teacher and student can suffer.

I, and most teachers I know, welcome transparent assessment of students, provided the stakes are appropriate and we can be guaranteed quality exams and consistent, accurate scoring. But as my many posts on the subject suggest, we aren’t getting quality tests. And we aren’t getting accurate scoring. And keep in mind, as more and more testing gets handed over to private enterprises, the process will become less and less transparent.

Ed Jones · July 8, 2013 at 3:25 pm

‘as more and more testing gets handed over to private enterprises, the process will become less and less transparent.’

Well, that’s an ideological perspective, isn’t it? There’s no law of the universe that says that people who work for government are more honest/motivated/intelligent/generous than people who work for the private sector. (No more than it would be true that people who work for the government are lazy, incompetent, etc.)

People are people. Most are good; a few are bad, the middle are middling.

MrHonner · July 8, 2013 at 3:44 pm

No, it is not an ideological perspective, and it has nothing to do with the nature of people. It has to do with the transparency of the process.

NY State Regents exams are made public shortly after the tests are given, as are the scoring rubrics. Full archives of past exams are freely available at http://www.nysedregents.org/regents_math.html.

Private testing entities keep tight control over their content, which is generally covered by copyright. They do not make exams publicly available after the fact, and thereby avoid the kind of scrutiny undertaken here.

Ed Jones · July 8, 2013 at 4:06 pm

OK, I see what you were thinking.

Still, the State has every right to place in the contract that exams will be made public. Companies which do not wish to do so need not reply.

MrHonner · July 8, 2013 at 4:21 pm

Yes. I wish they would do that.

CCSSIMath · July 8, 2013 at 4:07 pm

NYSED hasn’t posted the 6/13 exams on their website yet, but we’ve been champing at the bit to see several questions we’ve heard about. Does NYSED have a specific policy (read: gag order) regarding beating them to the publishing punch?

Given that there was an acknowledged defect in question #24 on this year’s Geometry exam, and that there were also acknowledged defects in Geometry, 8/2012, and Algebra 2/Trig, 1/2012, it seems that errors are becoming more commonplace, not less. That is the opposite of what you’d expect from exams that have been given in basically the same format, arising from the same syllabi, for years.

And these are only the blunders so severe that credit had to be issued to all students, not the usual ambiguities, poor formatting, questionable placement decisions (the issue in your posting), etc., that NYSED won’t own up to.

It also occurred to us that tossing out bad questions is now a mixed blessing for students (and teachers). Once upon a time, before scaling, if a question’s defect was acknowledged and credit given to all, it could only increase the pass rate, and NYSED had to eat crow.

Nowadays, while it seems such acknowledgments continue to benefit students, that’s not necessarily so. Even though all students get credit for such defective questions, it’s possible NYSED, in its secret meetings, concurrently raises the passing cutoff and/or changes the scale. Those who would have gotten the question wrong may improve their standing, but those who got it right anyway may suffer from a raised baseline, with the net effect on overall pass rates not clear.

In other words, NYSED may no longer be accountable to anyone for its blunders.

Finally, there’s no mea culpa from NYSED regarding the psychological effects that bad problems have on students; what do you do about the student who struggles with a defective question, knows the question is bad, and is only met by “do the best you can” from an exam proctor? Psychologists might argue that the fallout may extend past the question and affect a student’s psyche for the entire exam.

MrHonner · July 8, 2013 at 4:30 pm

I’m not sure what the hold-up is regarding posting the exams to the official NYSED website, but the exams can be found here: http://jmap.org/JMAP_REGENTS_EXAMS.htm.

There are a number of serious issues (that I will cover in upcoming posts), including those you mentioned. And it surprises me, too, that these incidents are not becoming any less frequent, as evidenced by how many posts I continue to write on this theme.

I worry too about the psychological effect these kinds of problems can have on test-takers. As I wrote here, detailing one of the most embarrassing math questions I’ve ever seen, the effect of such a problem may well extend past two points.